This year’s Adobe Max 2022 was all about 3D design and mixed reality headsets, but the AI-generated elephant in the room was the rise of text-to-image generators like Dall-E. How does Adobe plan to respond to these revolutionary tools? Slowly and cautiously, according to the keynote, but an important feature hidden in the new version of Photoshop suggests the process has begun.

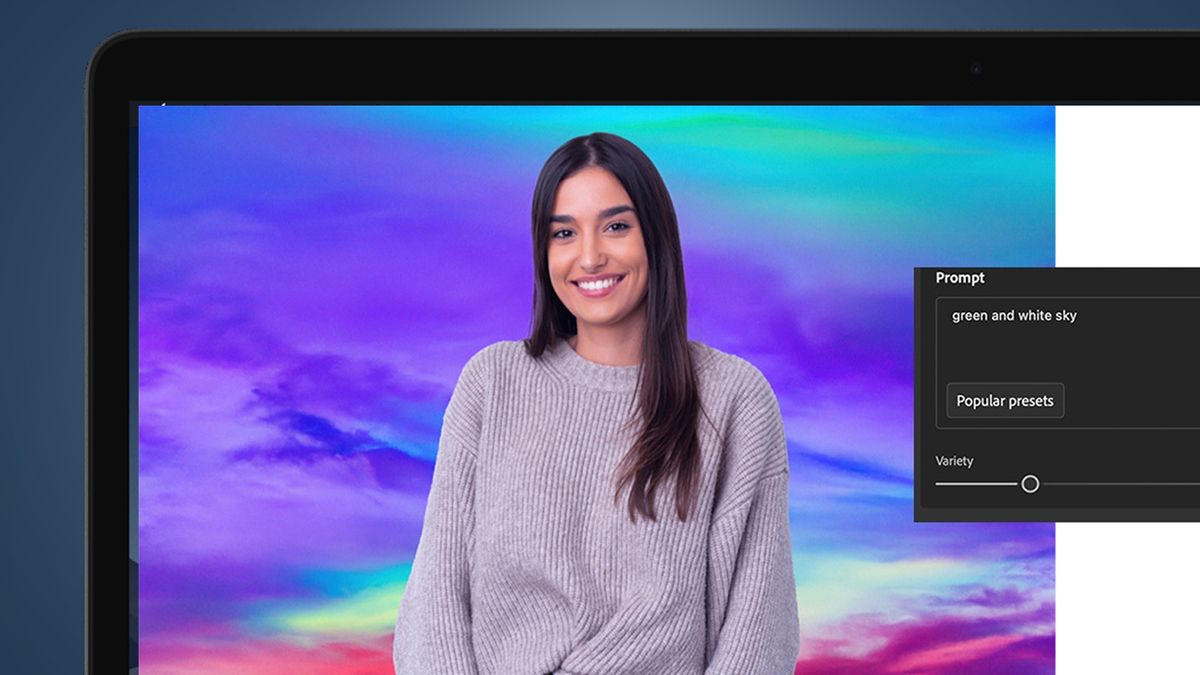

Near the end of the release notes for the latest Photoshop v24.0 there is a beta feature called “Background Neural Filter”. What does this do? Like Dall-E and Midjourney, it lets you “create unique backgrounds from descriptions.” All you have to do is enter a description of the background, select Create, and choose your favorite result.

Still, it’s a long way from being Adobe’s Dall-E competitor. It’s only available in Photoshop Beta, a testbed separate from the main app, and you can currently only input colors to generate different photo backgrounds, not weird concoctions from the darkest corners of your imagination.

But the “Background Neural Filter” is clear evidence that Adobe is making further forays into AI image generation, however cautiously. Its keynote at Adobe Max showed that it believes this frictionless method of creating images is unquestionably the future of Photoshop and Lightroom, once it has addressed minor issues like copyright and ethical standards.

Creative co-pilot

Adobe didn’t really mention the arrival of the “Background Neural Filter” at Adobe Max 2022, but it did lay out where the technology is eventually going.

David Wadhwani, president of Adobe’s digital media business, effectively said the company has the same technology as Dall-E, Stable Diffusion, and Midjourney; it has just chosen not to apply it in its apps. “Over the past few years, we’ve been investing more and more in our artificial intelligence engine, Adobe Sensei. I like to call Sensei your creative co-pilot,” Wadhwani said.

“We’re working on new features that will take our core flagship app to a whole new level. Imagine being able to ask your creative co-pilot in Photoshop to add objects to the scene simply by describing what you want, or asking your co-pilot to give you alternative ideas based on what you’ve already built. It’s like magic,” he added. It’s definitely a step further than Photoshop’s sky replacement tool.

He said this while standing in front of a mocked-up version of Photoshop with Dall-E-style features (above). The message is clear: Adobe can already do text-to-image generation at this scale, it has just chosen not to.

But it’s Wadhwani’s Lightroom example that shows how this technology can be more judiciously integrated into Adobe’s creative applications.

“Imagine if you could combine ‘gen-tech’ with Lightroom. So you could ask Sensei to turn night into day and a sunny photo into a beautiful sunset. Move the shadows or change the weather. It’s all possible today with the latest advances in generative technology,” he explained, in an unmistakable nod to Adobe’s new competitors.

So why hold back when someone else is eating your AI-generated lunch? The official reason, which certainly has some merit, is that Adobe has a responsibility to ensure that this new power is not used recklessly.

“For those unfamiliar with generative AI, it can simply conjure up an image from a textual description. We’re really excited about what this can do for you all, but we also want to do this thoughtfully,” Wadhwani explained. “We want to do this in a way that protects the rights of creators and supports the needs of creators.”

What does this mean in practice? While it’s still a bit vague, Adobe will be moving more slowly and cautiously than the likes of Dall-E. “This is our promise to you,” Wadhwani told the Adobe Max audience. “We are looking at generative technologies from a creator-centric perspective. We believe AI should augment human creativity, not replace it, and it should benefit creators rather than replace them.”

This partly explains why Adobe has only gotten as far as Photoshop’s “Background Neural Filter” so far. But that’s only part of the story.

The long game

Despite being a giant in creative applications, Adobe is undoubtedly still very innovative. Just check out some of the Adobe Labs projects, especially those that can turn real-world objects into 3D digital assets.

But Adobe is also prone to being caught off guard by fast-growing competitors. Photoshop and Lightroom were built as desktop-first tools, which gave the likes of Canva a head start on user-friendly, cloud-based design tools. That’s why Adobe agreed to pay $20 billion for Figma last month, more than Facebook paid for WhatsApp in 2014.

Is the same thing happening with the likes of Dall-E and Midjourney? Quite possibly, because Microsoft just announced that Dall-E 2 will be integrated into its new Designer graphic design app, which is part of its 365 productivity suite. Adobe may have doubts about how quickly this should happen, but AI image generators are heading into the mainstream.

Adobe did, however, offer a view on the ethical issues surrounding this fascinating new technology. With the rise of AI-powered image generation, the cloud of copyright questions continues to grow, and it is understandable that one of the founders of the Content Authenticity Initiative (CAI) might be reluctant to go all in on generative artificial intelligence.

Nonetheless, Adobe Max 2022 and the arrival of the “Background Neural Filter” show that AI image generation will undoubtedly become an essential part of Photoshop, Lightroom, and photo editing in general, though it may take a little longer to appear in your favorite Adobe apps.